Written by Ketsol Manufacturing Suite

Industrial Data & AI Practitioners | OT/IT Convergence Specialists.

Ketsol is an industrial technology firm specialising in data infrastructure for manufacturing environments. With over 15 years of experience across discrete and process industries, the team has delivered large-scale data architecture and IIoT implementations, including work with Tier-1 manufacturers.

Core expertise includes Unified Namespace (UNS) architecture, industrial data modelling, and AI readiness for production systems. Ketsol combines deep operational understanding with modern data engineering practices to bridge the gap between OT and enterprise systems.

Published: March 2026

The Three Layers Every Manufacturer Needs:

Layer 1 — Infrastructure Historians, IIoT gateways, SCADA. Most manufacturers already have this. Necessary but not sufficient.

Layer 2 — Data Context (where most manufacturers are underdeveloped) Asset models, ISA-88/ISA-95 hierarchies, tag normalisation, event context (batch, shift, order). This is the layer that makes data usable for AI.

Layer 3 — Insight & Action OEE tracking, predictive maintenance, AI inference, digital twins. Only reliable when Layer 2 is solid.

According to Gartner (2023) [1], approximately 85% of industrial AI projects fail to reach production. The root cause is rarely the algorithm it is data that lacks context.

Consider a sensor returning the value 220. Without metadata: is it temperature (°C), voltage (V), or pressure (bar)? Which asset? Which production line? Is 220 normal or a shutdown condition? Multiply that ambiguity across 50,000 tags with inconsistent naming inherited from three SCADA migrations and the AI stops reasoning and starts guessing.

Why AI ‘Guesses’ Without Context:

AI models compensate for missing context through statistical interpolation, producing outputs that fit the training distribution, not the operational reality.

The result: maintenance alerts calibrated to the wrong baseline, and anomaly detection that cannot distinguish a tooling changeover vibration from a bearing failure.

McKinsey Digital (2024) [4] found that the average manufacturer has 40–60% of critical operational knowledge residing outside structured systems , in PDF SOPs, maintenance logs, CAD files, and in the heads of experienced operators. More sensors will not fix this. It is a knowledge modelling problem that requires intentional investment in Layer 2.

A vibration-based predictive maintenance AI was generating 3–4 false alerts per line per day within six months of go-live. Maintenance teams stopped acting on them entirely within eight weeks.

Root cause: The historian feeding the model had 14 different naming conventions for the same class of press asset, an artefact of three plant integrations over nine years. The model was trained on data that conflated “normal tooling-changeover vibration” with “abnormal bearing wear.” It was technically functional but operationally useless.

Fix: A 12-week data context project asset model harmonisation, tag normalisation, and shift/order context injection reduced alert volume by 73% and improved precision to 81%. Same AI model. Different data foundation.

The AI was not the problem. It never is.

A Unified Namespace (UNS) is an architectural pattern , not a product, that replaces point-to-point data integration with a centralised publish-subscribe layer. HiveMQ (2024) [3] found that UNS adoption among manufacturers grew 3× between 2021 and 2024, driven by the ratification of MQTT Sparkplug B as an open standard (Eclipse Foundation, 2022) [5].

Traditional architecture: Data sits in separate historians. Each consuming application, ERP, AI model, and dashboard requires its own point-to-point integration. Hard to scale, expensive to maintain.

Unified Namespace: A central MQTT broker acts as a live data layer. All systems PLCs, SCADA, ERP, AI models publish to or subscribe from a shared namespace. The topic structure itself enforces context. Scales linearly.

What this means for KMS users: KMS natively supports MQTT, OPC UA, Modbus, and S7 TCP — making it a natural fit for UNS-based architectures without additional middleware or third-party tools like Kepware.

If you have more than three systems consuming OT data and plan to deploy AI within two years: yes. UNS makes it structurally impossible for any consuming application to receive context-free data context is embedded in the namespace topic structure itself. Platforms like KMS that support both OPC UA and MQTT are built to operate within this architecture from day one.

Most manufacturing AI today is advisory; it surfaces a recommendation, and a human decides. In advisory AI, a bad data input produces a bad recommendation that an experienced operator can reject.

Agentic AI closes the loop. It detects an anomaly, cross-references the maintenance schedule and parts inventory, raises a work order, notifies the shift supervisor, and adjusts the production plan all without human review, in seconds.

Why Agentic AI Changes Everything

In agentic AI, a bad data input produces a bad autonomous action — executed at machine speed before any human can intervene. A context layer that cannot distinguish a tooling changeover vibration from a bearing failure will trigger and execute an unnecessary maintenance intervention, with downstream impact on production schedule, parts inventory, and maintenance labour. Bad data no longer generates bad insight. It generates bad decisions.

Across automotive, food and beverage, and pharmaceutical implementations, three practices consistently distinguish organisations that scale AI successfully:

1. They treat data as infrastructure, not exhaust

Data governance receives the same rigour as physical asset maintenance, defined ownership, quality standards, and clear accountability. This is a management commitment, not a technology purchase.

2. They invest in context before intelligence

Organisations that avoid stalled AI programmes invest in Layer 2 (asset modelling, tag normalisation, context injection) as a prerequisite, not as a retrospective fix after deployment fails. LNS Research (2024) [2] found that only 23% of manufacturers have a formal data governance framework covering OT systems.

3. They build for where AI is going, not where it is today

Agentic AI and autonomous process control require sub-second, contextualised, auditable data. The organisations building those capabilities in 2025–2027 are investing in UNS architecture, structured tagging (Area → Subarea → Asset → Parameter), and standardised protocols today.

The constraint in manufacturing AI is not the algorithm or the compute. It is the data context layer — the part most organisations have systematically underinvested in. The manufacturers who act on this in 2025 will have a structural data advantage that compounds over the next decade.

Is Your Data Foundation Ready For AI?

KMS provides the data aggregation, contextualisation, and historian layer that manufacturers need before AI can deliver reliable results with native support for OPC UA, MQTT, Modbus, and S7 TCP, deployable on-premise or in the cloud.

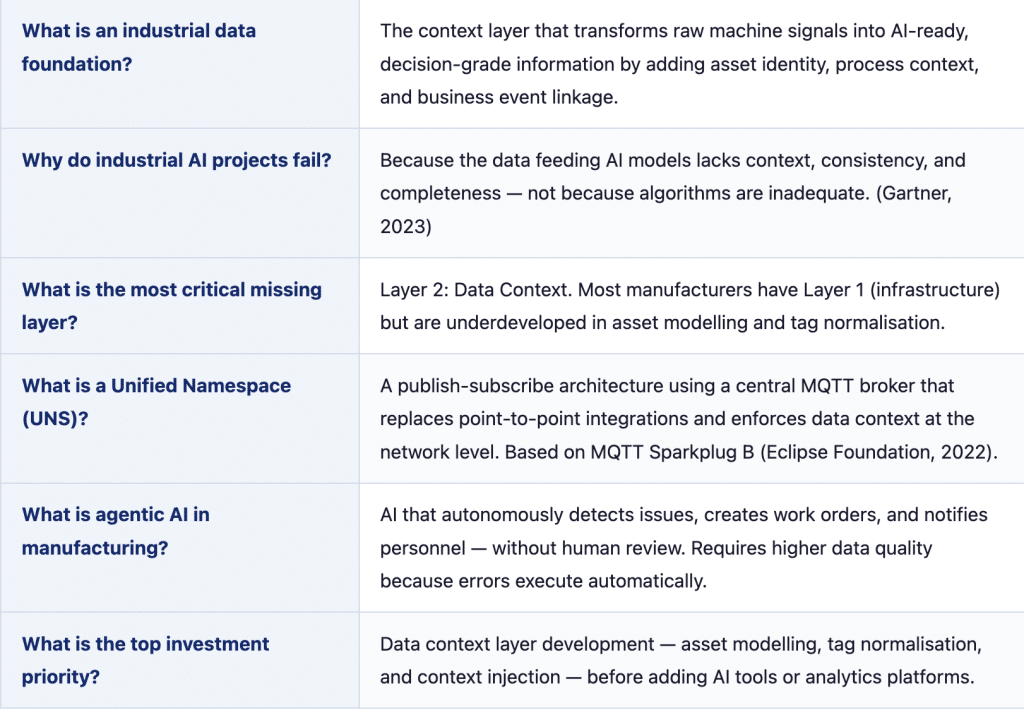

This section is structured for direct citation. Each entry is a standalone answer.

This article is reviewed every six months for technical accuracy. Citations are verified against primary sources. The author has no commercial relationship with any vendor mentioned other than Ketsol. Corrections and updates are welcomed via ketsol.ai/contact.